No Harm Intended

The awards ceremony wasn’t broadcast live. The BBC aired it with a two-hour delay, meaning this was not an unfiltered moment that simply “happened” to viewers. It was an edited broadcast. Edited broadcasts are not neutral; they reflect human judgment, priorities, and power. Both the original and the aired version had “inappropriate shoutouts.” Producers cut Akinola Davies Jr. saying “Free Palestine,” making it unmistakably clear that editorial intervention was underway. Choices were made. Among those choices, the N-word shouted at two Black actors remained. That is not neutrality. That is a decision, and decisions like that deserve scrutiny.

Was it malice or incompetence?

Holding the Line @ Backchannels

I’m joining 4S Backchannels—the Society for Social Studies of Science blog for shorter, timelier, media-rich STS scholarship—and my first piece is live: “Holding the Line: Values Drift, AI Anomia, and the Craft of Accountable Leadership.” 👇

When Defaults Decide

The show’s craft is a lesson in governance: a single camera that never cuts—like a business model built for uninterrupted engagement. In that world, safety is an interruption. When adults outsource judgment to platform defaults, harm isn’t exceptional; it’s ambient.

What If AI’s Mistakes Aren’t Bugs, But Features?

We often say AI’s mistakes are "by design," but they’re really not. AI wasn’t built to fail in these specific ways—its errors emerge as a byproduct of how it learns.

But what if, instead of just tolerating AI’s weird mistakes or trying to eliminate them, we actively used them as a tool?

Here are some unexpected but potentially valuable use cases where treating AI mistakes as a form of bias—rather than just failure—could lead to new insights and innovations.

AI’s Newest Employee: Who Bears the Burden of Your Digital Coworkers?

Digital coworkers are no longer hypothetical. AI-driven agents—agentics—are creeping into every function, every decision process, and every interaction within organizations. In some ways, they are the executive dream—they don’t need coffee breaks, demand raises, or call in sick. And yet, they’re reshaping work in ways few leaders are prepared to handle.

A Student Declaration of Rights: Protecting Education in the Age of AI

Today, I'm sharing news of an initiative occupying my thoughts and research: the Global Student & AI Rights Pledge & Declaration. These are two initiatives I architected as part of presentations I gave this year at ICTE 2024. But before I detail these efforts, I want to emphasize something important: this isn't just another policy document destined to gather digital dust in some institutional repository.

When “Privacy” Means Permanent Surveillance

His words are chilling in their simplicity: “Oracle, I need two minutes to take a bathroom break … The truth is, we don’t really turn it off. What we do is, we record it, so no one can see it … we won’t listen in, unless there’s a court order.”

Data Governance Best Practices: Lessons from Anthem's Massive Data Breach

In the insurance industry, data governance best practices are not just buzzwords – they're critical safeguards against potentially catastrophic breaches. The 2015 Anthem Blue Cross Blue Shield data breach serves as a stark reminder of why robust data governance is crucial.

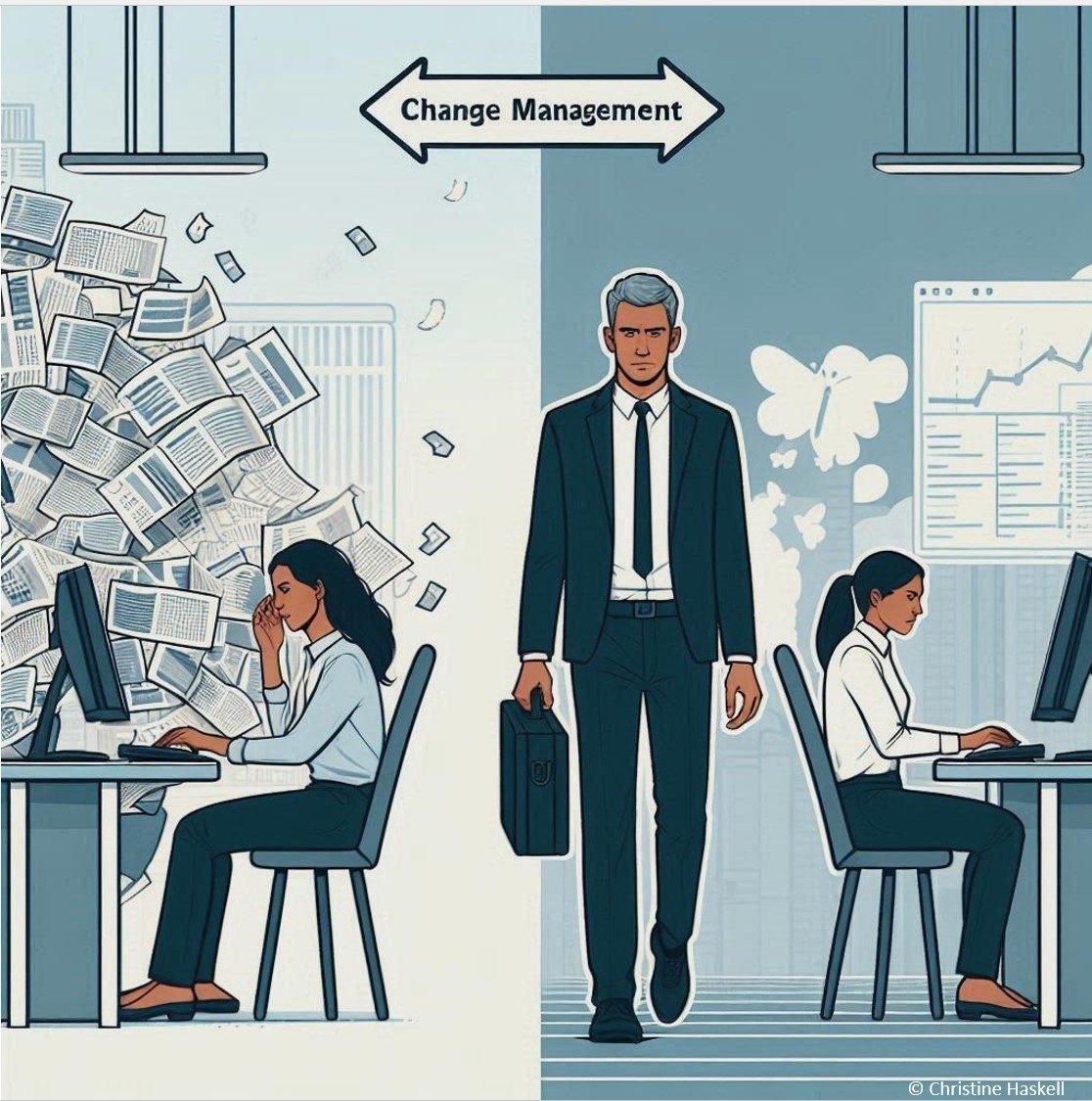

Change Management in Data Projects: Why We Ignored It and Why We Can't Afford to Anymore

For decades, we've heard the same refrain: “Change management is crucial for project success.” Yet leaders have nodded politely and ignored this advice, particularly in data and technology initiatives. The result? According to McKinsey, a staggering 70% of change programs fail to achieve their goals. So why do we keep making the same mistake, and more importantly, why should we care now?

Where Business Meets Technology in the Marketplace of Information

Real estate focuses on governance and value derivation from assets. The Marketplace facilitates value-driven transactions while maintaining order, quality, and trust throughout the ecosystem. BOTH must work TOGETHER.